Hardware Accelerated Transcoding in Kubernetes with Intel Integrated Graphics

Posted on August 18, 2019

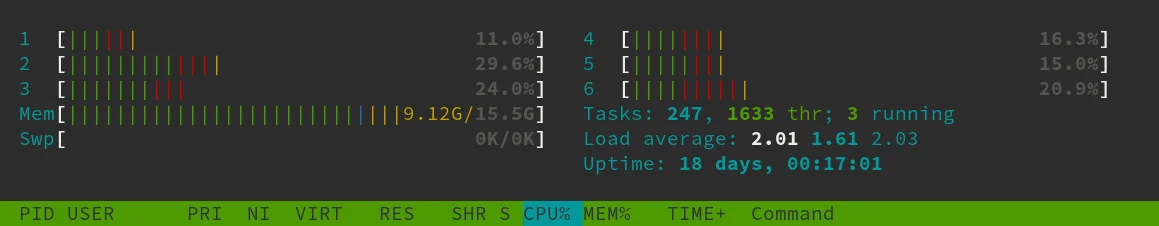

This is a follow-up to my previous post on deploying the Jellyfin media server in Kubernetes. That Jellyfin setup was very reliable and easy to maintain, but it struggled whenever transcoding was required. The CPU on the worker node (an i5 8500T) went over 100% load, with all six cores in use, when transcoding a single stream, as you can see in the screenshot below. This limited the number of simultaneous transcode streams to one, which is not ideal. The node also runs a number of other services, so clogging up the CPU was not a great idea. 4K CPU transcoding was not what I would consider a watchable experience, with plenty of stutters and dropped frames.

CPU transcoding 1080p file to 720p

Jellyfin does have limited support for hardware accelerated transcoding, which would solve my transcoding issues. However, hardware acceleration was not available in my setup, as Jellyfin was running within a pod that didn't have access to the node's iGPU. I had seen that it was possible to pass Nvidia GPUs to Kubernetes pods, so I started looking into doing the same with the Intel integrated graphics in my cluster at home.

I quickly came across Intel Device Plugins for Kubernetes on GitHub, which includes a GPU device plugin. I am just going to give a quick overview of the process, as the documentation in the repo is very good for getting you up and running. There are a couple of prerequisites: a working Go environment, a recent version of Docker (the version that ships with Fedora is too old to build the image) and a Kubernetes cluster.

First you have to build the GPU device plugin container image. It is a good idea to push this image to a container registry, such as Harbor, that is accessible by all of your cluster nodes. The image is rolled out to each of your nodes using a DaemonSet definition that is located in the repo at deployments/gpu_plugin/gpu_plugin.yaml. As this is a DaemonSet, it will be deployed to all of the nodes in the cluster, so keep this in mind if you have some AMD based nodes or even ARM nodes. You may have to add a nodeSelector to the definition so that it only matches the Intel nodes in your cluster.

Once the GPU device plugin pods are deployed to your nodes, you should be able to see that the following resource has been automatically added to each of them (it is listed under both Capacity and Allocatable).

$ kubectl describe node | grep gpu.intel.com

gpu.intel.com/i915: 1

gpu.intel.com/i915: 1
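For the mixed-hardware case mentioned above, one way to keep the plugin off non-Intel nodes is to label the Intel nodes and patch a matching nodeSelector into the DaemonSet. The following is only a rough sketch: the `igpu=intel` label and the patch file name are made up for illustration, and the DaemonSet name `intel-gpu-plugin` is an assumption, so check `metadata.name` in the YAML you actually deployed.

```shell
#!/bin/sh
# Sketch only: the label (igpu=intel) and the file name are hypothetical.
set -eu

PATCH_FILE="gpu-plugin-nodeselector-patch.yaml"

# Strategic merge patch that adds a nodeSelector to the DaemonSet's pod template.
cat > "$PATCH_FILE" <<'EOF'
spec:
  template:
    spec:
      nodeSelector:
        igpu: intel
EOF

echo "Wrote $PATCH_FILE"

# Against a real cluster the remaining steps would look roughly like this.
# They are left commented out so the sketch has no side effects, and the
# DaemonSet name is an assumption:
#
#   kubectl label node <intel-node-name> igpu=intel
#   kubectl patch daemonset intel-gpu-plugin --patch "$(cat gpu-plugin-nodeselector-patch.yaml)"
```

Adding the same `nodeSelector` block directly to the DaemonSet YAML before applying it would achieve the same thing; the patch is just convenient if the plugin is already running.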

This makes it very easy for pods to use the integrated GPU, which is what is needed to enable hardware accelerated transcoding in Jellyfin. All I had to do was add the above `gpu.intel.com/i915` entry to the resources section of the values.yaml in the Jellyfin helm chart and run a helm upgrade .... This made the Intel iGPU available to the Jellyfin pod. Hardware acceleration can then be enabled in the Jellyfin admin dashboard using VA API (Video Acceleration API).
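As a rough illustration of that values change, the sketch below writes a small override file that requests one GPU. It assumes the chart exposes a top-level `resources` key that is passed straight through to the container, which is common but not guaranteed, so check the chart's own values.yaml. The file name is hypothetical. Extended resources such as this one are requested under `limits`.

```shell
#!/bin/sh
# Sketch only: assumes the chart passes a top-level `resources` block
# through to the Jellyfin container. The file name is hypothetical.
set -eu

VALUES_FILE="jellyfin-gpu-values.yaml"

# Request one Intel iGPU for the pod via the extended resource
# advertised by the GPU device plugin.
cat > "$VALUES_FILE" <<'EOF'
resources:
  limits:
    gpu.intel.com/i915: 1
EOF

echo "Wrote $VALUES_FILE"

# With real release and chart names filled in, the override could be passed
# to helm, and the device nodes checked from inside the pod afterwards:
#
#   helm upgrade <release-name> <chart> -f jellyfin-gpu-values.yaml
#   kubectl exec <jellyfin-pod-name> -- ls /dev/dri
```

If the pod was scheduled with the resource, the second command should list the DRI device nodes that VA API uses; an empty or missing /dev/dri suggests the request never reached the container spec.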

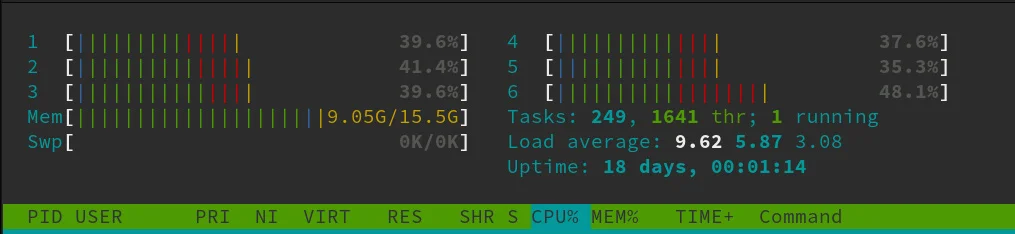

I was very impressed with the difference that this made. The CPU load reached a maximum of 25% while transcoding the same 1080p file, which had previously pushed it over 100% with CPU transcoding. I was also able to get five transcoded streams working simultaneously without issues, which would have been impossible with my previous setup. I probably could have tried for more, but for my usage I was happy enough with five. Below you can see an htop screenshot taken while transcoding the same 1080p file as above. The CPU load is much more comfortable thanks to the hardware acceleration.